Big Industries Academy

6 proven steps to become a DevOps Engineer

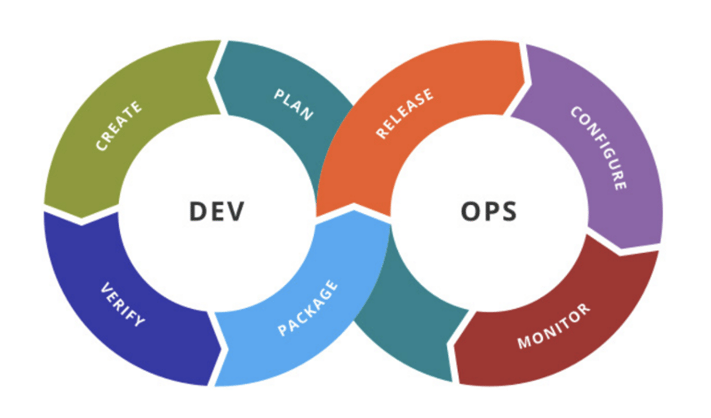

In this blog post we describe six DevOps skills that every software engineer should acquire to become a capable DevOps engineer.

DevOps is a culture that encourages collaboration between business, development, and operations teams, breaking down conventional silos. The main benefit is a cross-functional team in which everyone understands what the other members do and any member can pick up the job of another, resulting in better cooperation and a high-quality product for the client. Because there are no longer any silos, less time is spent handing code over between teams, such as the testing team and the operations team, which speeds up delivery. Another important idea is to automate everything, so that human errors are eliminated and clients receive a high-quality product.

Linux fundamentals and scripting

Linux is the dominant operating system for servers and cloud infrastructure, and it is often chosen for its security and stability. The majority of businesses run their environments on Linux-based machines. Many DevOps tools in the configuration management space, such as Chef, Ansible, and Puppet, run their master or control nodes on Linux. These tools automate the provisioning and management of infrastructure, and they are written in or driven by scripting languages such as Ruby and Python. Linux fundamentals and scripting knowledge are therefore required to get started with infrastructure automation, a key concept in DevOps.
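As an illustration of the kind of small automation script this section has in mind, here is a minimal Python sketch that flags filesystems that are filling up. The function names and the 90% threshold are assumptions chosen for the example, not part of any particular tool.

```python
import shutil


def disk_usage_percent(path: str) -> float:
    """Return how full the filesystem holding `path` is, as a percentage."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        # Some virtual filesystems report a size of zero; treat them as empty.
        return 0.0
    return usage.used / usage.total * 100


def paths_over_threshold(paths, threshold_percent=90.0):
    """Return (path, percent) pairs whose usage exceeds the threshold."""
    report = []
    for path in paths:
        percent = disk_usage_percent(path)
        if percent > threshold_percent:
            report.append((path, round(percent, 1)))
    return report


if __name__ == "__main__":
    # Hypothetical usage: check the root filesystem against a 90% limit.
    for path, percent in paths_over_threshold(["/"], threshold_percent=90.0):
        print(f"WARNING: {path} is {percent}% full")
```

A script like this could be scheduled with cron or called from a configuration management tool, which is where Linux and scripting knowledge come together.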

Understanding of various DevOps tools and technologies

A DevOps tool is an application that helps automate parts of the software development process. Such tools mainly support communication and collaboration between product management, software development, and operations professionals. They also enable teams to automate most software development activities, such as builds, conflict management, dependency management, and deployment, which reduces manual effort.

Continuous Integration And Continuous Delivery (CI/CD)

A solid grasp of continuous integration and continuous delivery helps teams deliver a high-quality product to clients faster. Continuous integration means that code changes are merged, built, and tested frequently, so integration problems are detected early and the developer’s life becomes simpler. Continuous delivery is an extension of continuous integration, in which freshly integrated code is automatically made ready for deployment with little or no human interaction.

In the waterfall model, by contrast, the development team must hand new code over to the testing team, which then moves the project ahead. This handover usually takes a couple of days. Such delays can be avoided by automating the transfer and testing processes, so that code is ready for deployment quickly. Continuous deployment is the next step in automating the delivery pipeline of an application: new code that passes the pipeline is automatically deployed to the production environment.
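The core idea of an automated pipeline can be sketched in a few lines of Python: stages run in order, and the first failure stops everything so that broken code never reaches deployment. This is a simplified model, not how any specific CI server works; the stage names and the `run_pipeline` helper are hypothetical.

```python
def run_pipeline(stages):
    """Run (name, step) pairs in order and stop at the first failing step.

    Each step is a callable returning True on success. Returns a list of
    (name, status) tuples for the stages that were actually executed.
    """
    results = []
    for name, step in stages:
        try:
            ok = bool(step())
        except Exception:
            # A crashing stage counts as a failed stage.
            ok = False
        results.append((name, "passed" if ok else "failed"))
        if not ok:
            break  # Later stages (for example, deploy) are never reached.
    return results


# Hypothetical stages standing in for real build, test, and package commands.
def build():
    return True


def unit_tests():
    return 2 + 2 == 4


def package():
    return True


if __name__ == "__main__":
    pipeline = [("build", build), ("test", unit_tests), ("package", package)]
    for name, status in run_pipeline(pipeline):
        print(f"{name}: {status}")
```

In a real setup each stage would invoke the project's build and test tooling, and a final deploy stage would turn continuous delivery into continuous deployment.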

Infrastructure as Code

In the DevOps world, Infrastructure as Code (IaC) is an established best practice. Infrastructure provisioning and management are described in code or declarative configuration files rather than carried out by hand. As a result, everything that applies to source code, such as version control, change tracking, and storage in repositories, can be extended to the application’s infrastructure. With IaC, manually setting up infrastructure and maintaining ad hoc shell scripts is largely a thing of the past. A person who understands how to define infrastructure as code produces infrastructure that is consistent, dependable, and less error-prone.
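To make the idea concrete, here is a minimal Python sketch of the reconciliation step that declarative IaC tools are built around: compare the desired state, which lives in version control, with the actual state, and derive the actions needed to close the gap. The resource names and the `plan` function are hypothetical and do not reflect the API of any real tool.

```python
def plan(desired, actual):
    """Compare desired and actual infrastructure and list the required actions.

    Both arguments map a resource name to its specification (a dict).
    Returns a list of (action, resource_name) tuples.
    """
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


if __name__ == "__main__":
    # Hypothetical desired state, as it might be stored in a repository.
    desired = {
        "web-server": {"size": "medium", "count": 2},
        "database": {"size": "large", "count": 1},
    }
    # Hypothetical state currently running in the environment.
    actual = {
        "web-server": {"size": "small", "count": 2},
        "old-cache": {"size": "small", "count": 1},
    }
    for action, name in plan(desired, actual):
        print(f"{action}: {name}")
```

Because the desired state is plain data, every change to it can be reviewed, versioned, and rolled back like any other code change.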

Security skills

The three pillars of DevOps are speed, automation, and quality. When we raise the speed of delivery, vulnerabilities can also be introduced into the code at a quicker rate. DevOps professionals should therefore be able to write code that is resistant to a variety of threats. This has led to DevSecOps thinking, in which security is built in from the start rather than added afterwards.
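One way to build security in from the start is to add automated checks to the pipeline. The Python sketch below scans text for hard-coded secrets before code is merged. The two patterns are illustrative assumptions only and are far from a complete rule set; dedicated scanning tools go much further.

```python
import re

# Illustrative patterns only; a real scanner would use a much larger rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]+['\"]"),
}


def find_secrets(text):
    """Return (line_number, label) pairs for lines that look like secrets."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


if __name__ == "__main__":
    # Hypothetical file content being checked before a merge.
    sample = 'user = "deploy"\npassword = "change-me"\n'
    for line_number, label in find_secrets(sample):
        print(f"line {line_number}: possible {label}")
```

Run as a pipeline stage, a check like this fails the build as soon as a secret is committed, instead of leaving the problem to be found after release.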

Learn and use Cloud Technologies

According to the Amazon Web Services (AWS) website "Cloud computing is the on-demand delivery of compute power, database storage, applications, and other IT resources through a cloud services platform via the internet with pay-as-you-go pricing."

If you have expertise and experience with AWS, Microsoft Azure, or GCP, you will be in greater demand on the job market.

Source illustration: Data Kitchen

Source text: Sanjam Singh

Matthias Vallaey

Matthias is the founder of Big Industries and a Big Data Evangelist. He has a strong track record in the IT services and software industry, working across many verticals. He is highly skilled at developing account relationships by bringing innovative solutions that exceed customer expectations. In his role as entrepreneur he builds partnerships with Big Data vendors and introduces their technology where it brings the most value.