Databricks: The Power of Delta Lake and Lakehouse Architecture

Big Industries recently entered into a partnership with Databricks, a leading cloud-based data and AI platform that lets organizations unify their data engineering, data science, machine learning, and analytics work. Databricks helps businesses solve some of their most challenging data problems and deliver solutions faster and more efficiently.

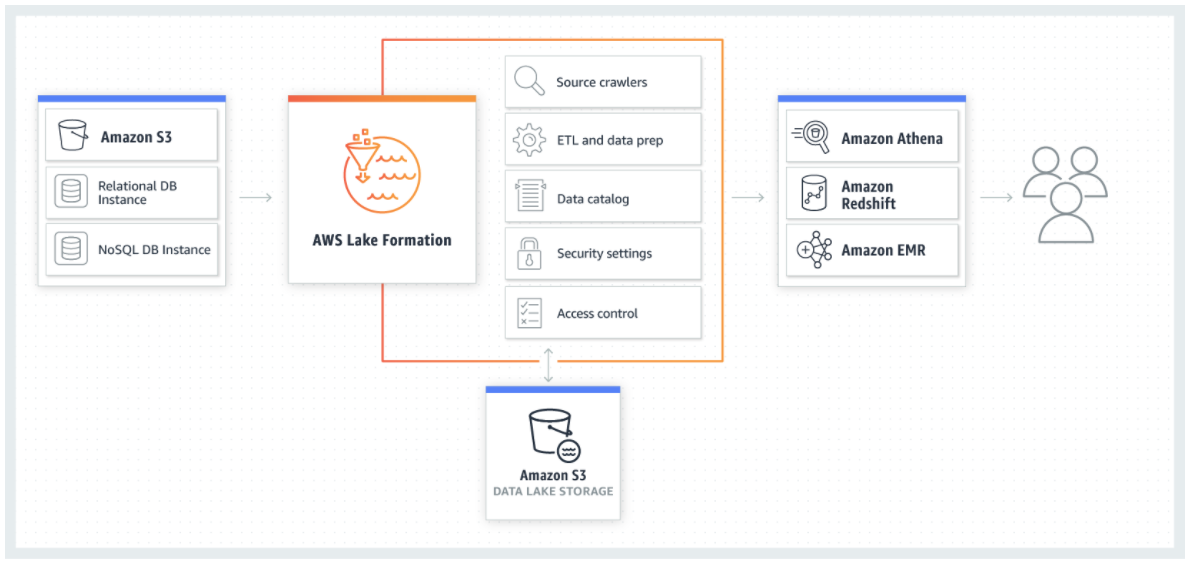

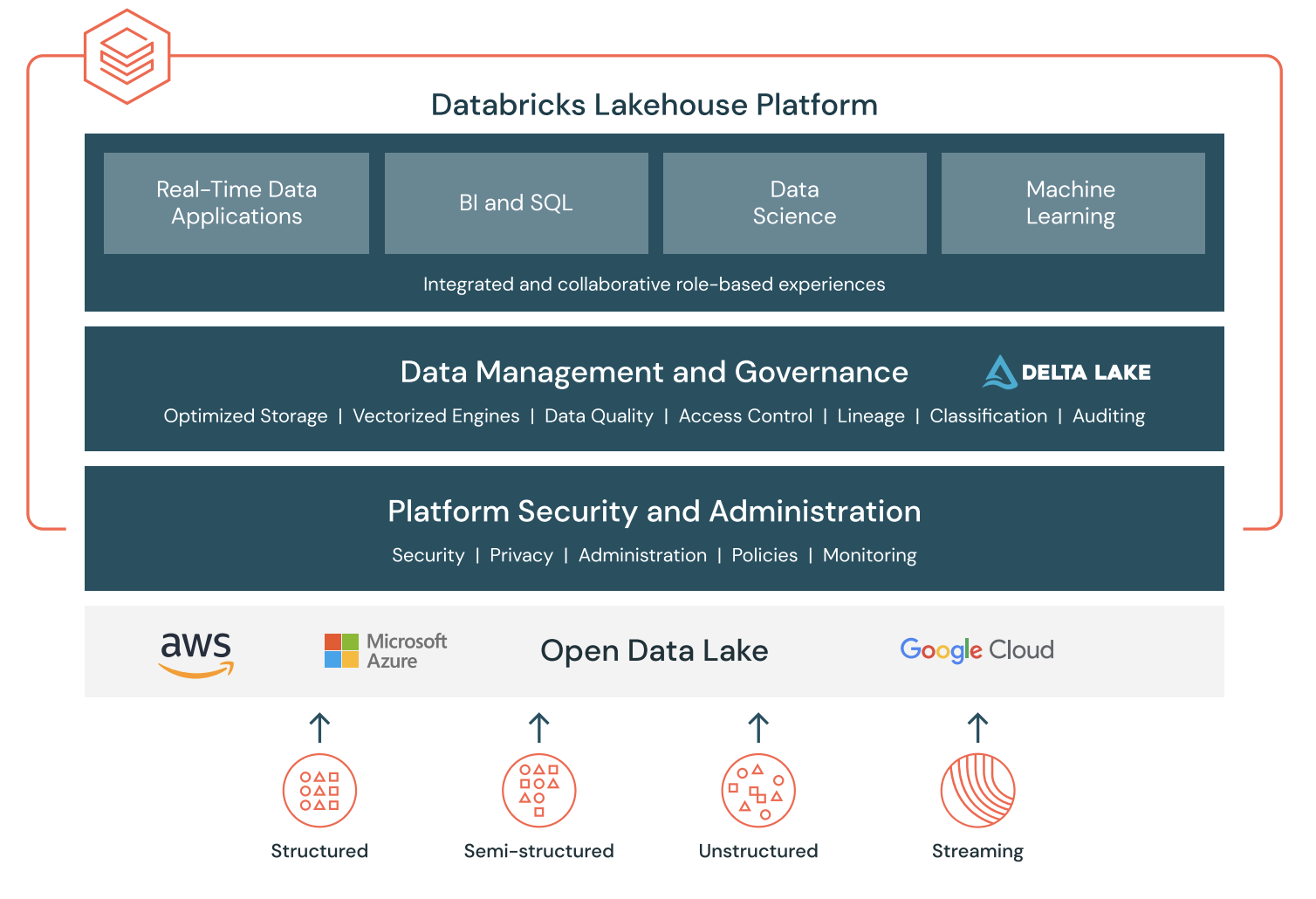

The Lakehouse Platform enables users to unify data and AI across various sources and formats, and scale up or down as needed. Databricks also offers a collaborative environment where data teams can work together and share insights.

What is Delta Lake and Lakehouse Architecture?

Delta Lake is an open-source storage layer that brings reliability, performance, and quality to data lakes. Delta Lake enables users to manage and query both structured and unstructured data with ACID transactions, schema enforcement, and time travel. Delta Lake also supports streaming and batch processing, and integrates with popular frameworks like Apache Spark, Apache Hive, and Presto.
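To make the three guarantees named above more concrete, here is a deliberately simplified sketch in plain Python. It is not Delta Lake and does not use its API; the class `ToyVersionedTable` and its methods are hypothetical names invented for illustration. It only shows the general idea: each write is validated against a schema, a batch is committed all-or-nothing, and every commit creates a new version that can still be read later.

```python
from dataclasses import dataclass, field


@dataclass
class ToyVersionedTable:
    """Toy in-memory table. An illustration only, NOT Delta Lake's implementation."""

    schema: dict  # column name -> expected Python type (hypothetical format)
    _log: list = field(default_factory=list)  # one entry per committed batch

    def append(self, rows):
        """Validate every row, then commit the whole batch as one new version."""
        for row in rows:
            # Schema enforcement: reject rows with missing or extra columns.
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match the schema")
            # Schema enforcement: reject values of the wrong type.
            for column, expected in self.schema.items():
                if not isinstance(row[column], expected):
                    raise ValueError(f"{column!r} must be {expected.__name__}")
        # All-or-nothing: the log grows only after the entire batch has passed.
        self._log.append(list(rows))
        return len(self._log) - 1  # version number of this commit

    def read(self, version=None):
        """Return all rows as of `version` (the latest version when omitted)."""
        if version is None:
            version = len(self._log) - 1
        elif not 0 <= version < len(self._log):
            raise IndexError(f"unknown version {version}")
        # Time travel: replay only the commits up to the requested version.
        return [row for batch in self._log[: version + 1] for row in batch]
```

With a schema such as `{"id": int, "name": str}`, two successful `append` calls produce versions 0 and 1, and `read(0)` still returns only the rows from the first commit. A batch containing one invalid row raises `ValueError` and leaves the table unchanged. Delta Lake itself provides these properties at data-lake scale through a transaction log stored alongside the data files.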

Lakehouse architecture is a new paradigm that combines the best of data lakes and data warehouses. Lakehouse architecture allows users to build data pipelines that can handle both traditional analytics and advanced AI use cases, such as machine learning and deep learning. Lakehouse architecture leverages Delta Lake as the foundation, and adds features like governance, security, and metadata management.

Big Industries and Databricks

Big Industries is a leading provider of data and AI solutions for industries such as finance, communications, utilities, and the public sector. By partnering with Databricks, Big Industries can provide its customers with a secure, scalable, and cost-effective data platform that supports the entire data lifecycle, from ingestion to insight. Databricks enables Big Industries customers to:

- Simplify and automate their data pipelines and workflows, reducing the complexity and overhead of managing multiple tools and systems.

- Accelerate their data analytics and machine learning projects, using the latest technologies and frameworks such as Spark, TensorFlow, PyTorch, and scikit-learn.

- Collaborate and innovate across teams and roles, using a unified and interactive workspace that supports multiple languages and environments such as Python, R, SQL, and Scala.

- Enhance their data security and compliance, using built-in features such as encryption, authentication, authorization, auditing, and governance.

- Scale their data operations and performance, using a cloud-native and serverless platform that automatically adjusts to the changing needs and demands of their business.

With Databricks, Big Industries customers can transform their data into actionable insights and drive business outcomes. Whether they want to improve their customer experience, optimize their operations, increase their revenue, or reduce their risk, Databricks can help them achieve their goals faster and smarter.

Matthias Vallaey

Matthias is the founder of Big Industries and a Big Data evangelist. He has a strong track record in the IT services and software industry, working across many verticals. He is highly skilled at developing account relationships by bringing innovative solutions that exceed customer expectations. As an entrepreneur, he builds partnerships with Big Data vendors and introduces their technology where it brings the most value.