Confluent

Building Real Time Data Pipelines with Apache Kafka

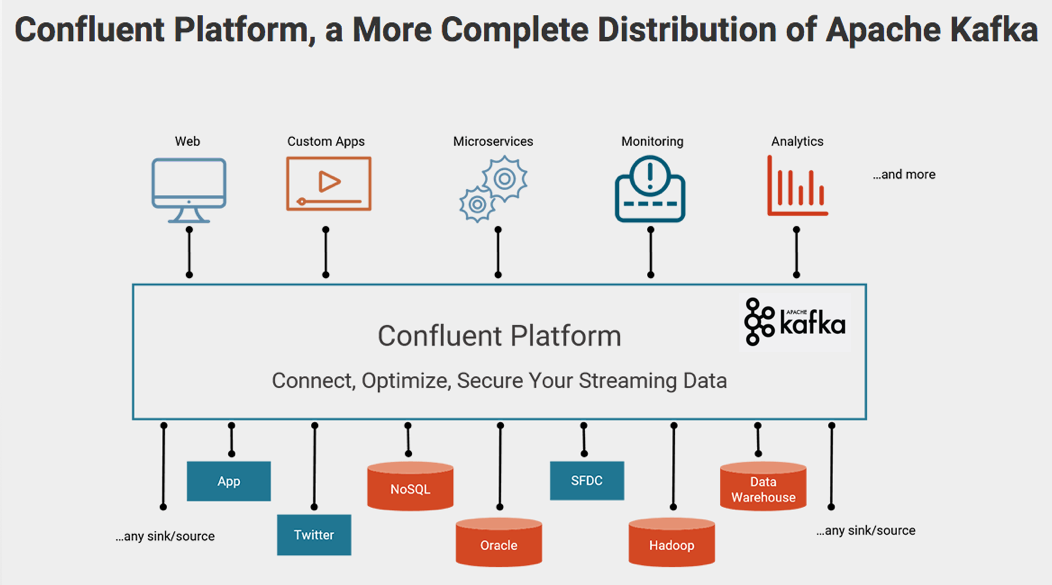

Apache Kafka is a distributed publish-subscribe messaging system designed to be fast, scalable, and durable. This open source project, licensed under the Apache license, has gained popularity within the Hadoop ecosystem and across multiple industries. Its key strength is the ability to make high-volume data available as a real-time stream for consumption in systems with very different requirements: batch systems like Hadoop, real-time systems that require low-latency access, and stream processing engines like Apache Spark Streaming that transform the data streams as they arrive. Kafka’s flexibility makes it well suited to a wide variety of use cases, from replacing traditional message brokers to collecting user activity data, aggregating logs, and gathering operational application metrics and device instrumentation.

Apache Kafka Technology Overview

Kafka’s strengths are:

* High Throughput & Low Latency: Even with very modest hardware, Kafka can support hundreds of thousands of messages per second, with latencies as low as a few milliseconds.

* Scalability: A Kafka cluster can be elastically and transparently expanded without downtime.

* Durability & Reliability: Messages are persisted on disk and replicated within the cluster to prevent data loss.

* Fault Tolerance: The Kafka cluster is designed to tolerate the failure of individual machines.

* High Concurrency: Kafka can handle a large number (thousands) of diverse clients writing to and reading from it simultaneously.

Common Use Cases for Kafka

Log Aggregation

Kafka can be used across an organization to collect logs from multiple services and make them available in a standard format to multiple consumers, including Hadoop, Apache HBase, and Apache Solr.

Messaging

Message brokers are used for a variety of reasons, such as decoupling data processing from data producers and buffering unprocessed messages. Kafka provides high throughput, low latency, replication, and fault tolerance, making it a good solution for large-scale message processing applications.

Customer Activity Tracking

Kafka is often used to track the activity of customers on websites or mobile apps. User activity (pageviews, searches, clicks, or other actions users may take) is published by application servers to central Topics, with one Topic per activity type. These Topics are available for subscription by downstream systems for monitoring usage in real time, and for loading into Hadoop or offline data warehousing systems for offline processing and reporting. Activity tracking is often very high volume, as many messages are generated for each user pageview.
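The "one Topic per activity type" layout can be illustrated with a minimal sketch. It uses an in-memory dictionary as a stand-in for a Kafka cluster rather than a real producer client. The `activity.<type>` naming scheme, the `publish_activity` helper, and the message fields are all assumptions made for illustration.

```python
import json
from collections import defaultdict

# In-memory stand-in for a Kafka cluster: topic name -> list of serialized messages.
# (Hypothetical; a real deployment would send these through a Kafka producer client.)
topics = defaultdict(list)


def topic_for(activity_type):
    """Map an activity type to its topic name (the naming scheme is an assumption)."""
    return f"activity.{activity_type}"


def publish_activity(user_id, activity_type, details):
    """Serialize one user action and append it to the topic for its activity type."""
    message = json.dumps({"user": user_id, "type": activity_type, "details": details})
    topics[topic_for(activity_type)].append(message)


# An application server would call this once per user action.
publish_activity("u1", "pageview", {"url": "/home"})
publish_activity("u1", "search", {"query": "kafka"})
publish_activity("u2", "pageview", {"url": "/pricing"})

# Downstream systems subscribe only to the activity types they care about.
for name, messages in sorted(topics.items()):
    print(name, len(messages))
```

Keeping each activity type in its own Topic lets a real-time monitor read only pageviews, for example, while a batch loader reads every Topic.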

Operational Metrics

Kafka is often used for logging operational monitoring data. This involves aggregating statistics from distributed applications to produce centralized feeds of operational data, for alerting and reporting.

Stream Processing

Many users end up doing stage-wise processing of data, where data is consumed from topics of raw data and then aggregated, enriched, or otherwise transformed into new Kafka Topics for further consumption. For example, a processing flow for an article recommendation system might crawl the article content from RSS feeds and publish it to an “articles” Topic; further processing might help normalize or de-duplicate this content to a Topic of cleaned article content; and a final stage might attempt to match this content to users. This creates a graph of real-time data flow. Spark Streaming, Storm, and Samza are popular frameworks that are used in conjunction with Kafka to implement Stream Processing pipelines.
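The article recommendation flow described above can be sketched as a chain of stages, each reading one Topic and writing the next. This is a simplified illustration: plain Python lists stand in for Kafka Topics, the normalization and keyword matching are deliberately naive, and every function and field name here is hypothetical.

```python
def normalize(article):
    """Naive normalization: trim and lowercase the URL, collapse whitespace in the title."""
    return {
        "url": article["url"].strip().lower(),
        "title": " ".join(article["title"].split()),
    }


def clean_stage(raw_topic):
    """Stage 2: read raw articles, emit normalized articles with duplicate URLs dropped."""
    seen_urls = set()
    cleaned_topic = []
    for article in raw_topic:
        cleaned = normalize(article)
        if cleaned["url"] in seen_urls:
            continue
        seen_urls.add(cleaned["url"])
        cleaned_topic.append(cleaned)
    return cleaned_topic


def match_stage(cleaned_topic, interests):
    """Stage 3: pair each cleaned article with users whose keywords appear in its title."""
    recommendations = []
    for article in cleaned_topic:
        title_words = set(article["title"].lower().split())
        for user, keywords in sorted(interests.items()):
            if title_words & keywords:
                recommendations.append((user, article["url"]))
    return recommendations


# Stage 1 (the crawler) would publish to the "articles" Topic; here it is a literal list.
raw_articles = [
    {"url": "http://example.com/A ", "title": "Kafka  in   production"},
    {"url": "http://example.com/a", "title": "Kafka in production"},
    {"url": "http://example.com/b", "title": "Gardening tips"},
]
user_interests = {"alice": {"kafka"}, "bob": {"gardening"}}

cleaned_articles = clean_stage(raw_articles)
recommendations = match_stage(cleaned_articles, user_interests)
print(recommendations)
```

Because each stage depends only on its input Topic, stages can be developed, scaled, and replaced independently.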

Event Sourcing

Event sourcing is a style of application design where state changes are logged as a time-ordered sequence of records. Kafka’s support for very large stored log data makes it an excellent backend for an application built in this style.
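As a minimal sketch of the idea, the example below rebuilds an account balance by replaying a time-ordered list of events. The list stands in for a Kafka Topic, and the event names and the `apply_event` and `replay` helpers are hypothetical.

```python
from functools import reduce


def apply_event(balance, event):
    """Return the new balance after applying a single state-change event."""
    kind, amount = event
    if kind == "deposited":
        return balance + amount
    if kind == "withdrew":
        return balance - amount
    raise ValueError(f"unknown event type: {kind}")


def replay(log, initial=0):
    """Rebuild state by folding over the events in the order they were logged."""
    return reduce(apply_event, log, initial)


# The log is the source of truth; the current balance is derived from it.
event_log = [("deposited", 100), ("withdrew", 30), ("deposited", 5)]

current_balance = replay(event_log)
balance_after_two_events = replay(event_log[:2])
print(current_balance, balance_after_two_events)
```

Replaying a prefix of the log recovers the state at an earlier point in time, which is one reason retaining the full log is useful in this style.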

Integrating Kafka with Other Components of Hadoop

This section describes the seamless integration between Kafka and other data storage, processing and serving layers of Hadoop.

Matthias Vallaey

Matthias is the founder of Big Industries and a Big Data Evangelist. He has a strong track record in the IT services and software industry, working across many verticals. He is highly skilled at developing account relationships by bringing innovative solutions that exceed customer expectations. In his role as an entrepreneur, he builds partnerships with Big Data vendors and introduces their technology where it brings the most value.